From Isaac Asimov’s Three Laws of Robotics to Mary Shelley’s Frankenstein to the television series Star Trek, both utopian and dystopian visions of Artificial Intelligence abound in popular culture and science fiction. We think of reasoning, problem-solving, planning, and making judgments as natural forms of our (very human) intelligence. When this kind of intelligence is built into machines, those agents become artificially intelligent (hence the name). Proponents of Artificial Intelligence boast that these bots will advance modern medicine, reduce labor costs, and foster the growth of an advanced society. Indeed, some of these benefits are already visible. Skeptics, however, warn that the unintended consequences of AI will harm our society, possibly creating a dystopian reality (yikes, just like the books, really?). Is it really as scary as we think? Does it warrant such a scare after all? When we consider how the underlying ethical concerns may affect us right now, we can begin to understand just how dangerous the potential effects of AI really are, and precisely why we need ethical guidelines in the first place.

One of the more pressing philosophical concerns regarding AI technology is the threat to job security. If human labor is replaced by this mechanical muscle, how many jobs will be lost to AI? Will thousands of workers be put on the street? The impact of technological innovation on employment has been a dominant subject in economics since the dawn of, well, the industrial age, and after countless studies on the topic, there is much cause for concern. Why is that, exactly? Generally speaking, any task that follows well-defined procedures can, in principle, be automated. This means automation may take over many of our jobs, and low-wage manual jobs are at the greatest risk. People need these jobs. According to the 2016 Economic Report of the President (prepared by the Council of Economic Advisers and published each February), 83% of jobs paying less than $20 per hour, 31% of jobs paying between $20 and $40 per hour, and 4% of jobs paying more than $40 per hour were at high risk of being automated. Because that risk is concentrated among the lowest-paid workers, the evidence points toward a possible large-scale increase in wealth inequality in the future, which is cause for major concern.

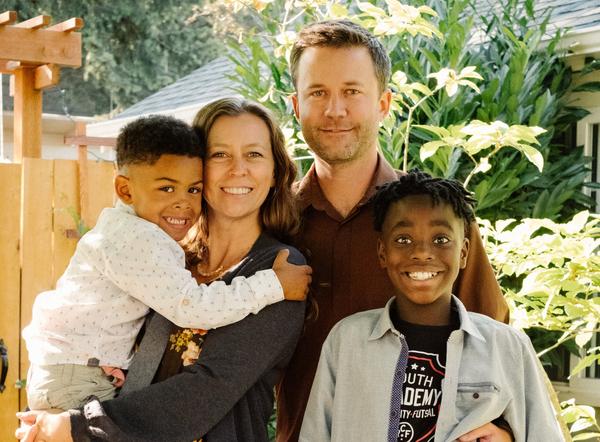

Another pressing ethical concern involves racial and sexual prejudice. AI systems have already displayed these prejudices: because they learn from large quantities of data, they also absorb the racial and sexual biases embedded in that data. For example, AI is used to shortlist candidates for senior positions, and because these roles have historically been dominated by men, the algorithms reproduce this bias when shortlisting. That means they shortlist men more often than women without, well, thinking. And if the machines aren't thinking, can we expect them to weigh morality and ethics at all? This spells trouble for progress toward a more gender-neutral reality if these systems only serve to perpetuate backward ideologies. It spells trouble racially as well: AI shortlisting has favored white candidates over black candidates, and it has also discriminated against names traditionally associated with African Americans. In both cases, AI shows an unfair bias against African American and female candidates, hindering the progress of our society and entrenching inequality in the workforce.

The last ethical concern in this article pertains to the use of AI in the court system. Risk assessment tools that predict whether an offender is likely to reoffend show a racial bias in how they score people. In these tools, black offenders are more likely than white offenders to be flagged as high-risk (that is, predicted to offend again). These biases are quite likely a mirror effect: we are the source of the programming (we teach the robots), so our own human biases show up explicitly in the agents. How, then, can we set out to rectify these issues? That is the question. This is where sound ethical research comes into play, and with more and more money being invested in ethical research institutions across the globe, these questions are being scrutinized again and again. Still, much remains unanswered, above all: how will the rise of AI continue to affect mankind in the future?